Geospatial Progress: Modernizing QGIS, Simplifying Satellite AI with Alpha Earth, and Scaling Google Earth for Business

There are always great things happening in the geospatial ecosystem, but this past week brought a few standout announcements that are worth highlighting. Rather than a sudden shift, these updates represent practical, steady progress across different corners of the industry, from open-source desktop software to AI data processing and enterprise mapping tools.

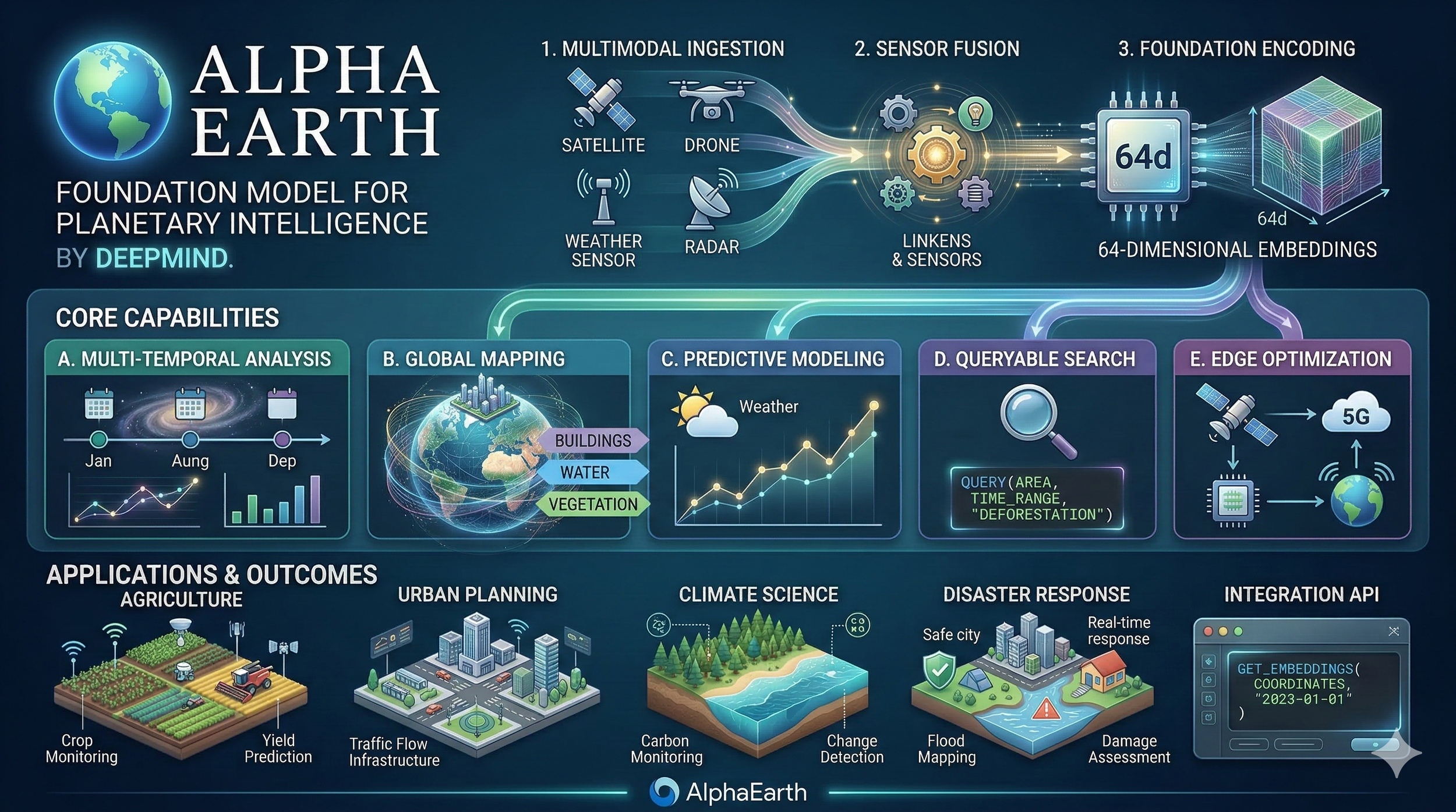

Specifically, three notable releases caught our attention. First, the open-source community delivered QGIS 4.0 "Norrköping," bringing a necessary structural update to ensure the popular desktop spatial analysis tool remains fast and reliable for everyday users. Second, Google DeepMind released the 2025 update to their AlphaEarth Foundations Satellite Embeddings, which simply makes it easier for developers to process and use complex, heavy satellite imagery. Finally, Google Earth rolled out its new Professional and Professional Advanced tiers, adding enterprise features and AI tools tailored for organizational workflows rather than just individual use.

Taken together, these three releases offer a good snapshot of what professionals are working on today: making raw planetary data easier to handle, providing better collaborative environments for decision-making, and keeping core open-source laboratories up to date.

The following sections will briefly explore these three releases, detailing what they actually do, their practical applications across various industries, and the communities behind them.

The Heartbeat of Open Source: The Human Story Behind QGIS 4.0 "Norrköping"

To truly understand the magnitude of the QGIS 4.0 release, one must first understand the soul of the open-source geospatial community. Open-source software of this scale is rarely built in sterile corporate silos; it is forged across shifting time zones and language barriers, and sustained through late-night coding sessions by individuals who share a fiercely held philosophy. The official announcement of QGIS 4.0 articulated this philosophy with poetic clarity, asserting that the community believes "empowering people with spatial decision-making tools will result in a better society for all of humanity." This core belief is the engine that drives the project to remain entirely free, actively encouraging people far and wide to harness its power regardless of their financial status or geographic location.

On March 9, 2026, a wait that had stretched for years finally came to a jubilant end. QGIS 4.0, affectionately codenamed "Norrköping" in honor of the Swedish city that hosted the vibrant 2025 QGIS User Conference, was officially released to the world. It marked the first major version update in approximately eight years, following the beloved release of QGIS 3.0 "Girona" in early 2018.

A Quarter-Century of Dedication and the Unseen Support Networks

The technical achievements of version 4.0 are staggering, but the release was accompanied by a deeply emotional community video that highlighted the profound human element humming beneath the code. The narrative of QGIS is intrinsically tied to its sheer longevity and the fierce loyalty of its global user base. In the commemorative release video, project founder Gary Sherman reflected on the humble origins of the software, his voice carrying the weight of history as he noted that the journey began twenty-four years ago in the isolated expanses of Alaska. What started as a modest tool to view spatial data has evolved into the world’s premier open-source desktop GIS, supported by a diverse ecosystem that rivals the largest software conglomerates on Earth.

The release video functioned as a global tapestry of human dedication, illustrating the software's vast, borderless reach. Voices of jubilation and relief poured in from Brazil, Indonesia, Tanzania, Bosnia, Portugal, the Czech Republic, India, Madagascar, Switzerland, and the Netherlands. Long-term users shared their personal histories alongside the software's evolution. Udwell Gandhi celebrated an astonishing twenty years working with the platform, while Kurt Mankey reminisced with nostalgia about starting his career with version 0.6 back in 2005. Arthur Nani from Brazil highlighted a painstaking, fifteen-year journey of translating the software’s endless strings into Portuguese and laboring to build a robust local community from the ground up. Marco Bernasocchi, the current chair of QGIS.org, expressed immense pride in the community, reflecting on his own massive contributions, including the creation of the mobile field-mapping counterpart, QField.

Yet perhaps the most resonant and emotionally grounding moment of the celebration was an anonymous contributor's heartfelt message of gratitude directed not just at the developers, but at their families and support networks. Open-source development demands immense, often unseen personal sacrifice: countless weekends, holidays, and evenings spent debugging code, reviewing pull requests, or writing documentation. Publicly acknowledging the spouses, partners, and children whose patience and encouragement made this technical achievement possible brought a profound sense of human reality to a highly technical milestone.

The Agony and the Ecstasy of the Qt6 Migration

While the outward graphical user interface of QGIS 4.0 remains reassuringly familiar to its massive, established user base, the underlying architecture has undergone a seismic, necessary upheaval. The primary catalyst for the agonizing push toward version 4.0 was an unavoidable technical migration to the modern Qt6 framework.

This transition was not merely a vanity feature upgrade; it was an existential necessity for the software's survival and relevance. The previous underlying framework, Qt 5.15, reached the end of its standard support (EOS) in May 2025, after which critical security patches and upstream fixes are available only under highly restrictive commercial terms. By undertaking the herculean task of migrating to Qt6, the QGIS core development team effectively future-proofed the massive codebase, ensuring the community would maintain ongoing access to modern libraries, enhanced layout rendering, optimized PDF output generation, and vital security improvements.

However, migrating a platform as incredibly complex, historically dense, and heavily customized as QGIS is fraught with technical peril. The migration signaled a definitive break in the Application Programming Interface (API). For a platform that relies on a massive, decentralized ecosystem of third-party plugins—upon which many geospatial professionals stake their daily livelihoods—breaking the API is a terrifying prospect that risks alienating users and destroying established workflows.

The developer community navigated this treacherous transition with remarkable foresight and deep empathy for their users. Rather than forcing a hard reset that would instantly break thousands of existing workflows, the core team engineered QGIS 4.0 to deliberately retain deprecated APIs wherever technically feasible. This strategic, compassionate decision dramatically minimized the friction for independent plugin developers. It allowed them to utilize newly provided tools like the pyqgis4-checker—available via Ubuntu and Fedora Docker images—to test and update their code incrementally rather than being forced into undertaking massive, panicked rewrites. While deeply legacy APIs from the ancient 2.x era (such as older Processing APIs) are no longer guaranteed support, the transition has been hailed across industry forums as exceptionally smooth for such a major version jump.
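For plugin authors who want to support both series during the incremental migration described above, one common pattern is to branch on the running QGIS version. The sketch below is only an illustration: in a real plugin the integer would typically come from `Qgis.QGIS_VERSION_INT`, but here it is passed in as a plain argument so the code runs without QGIS installed. The helper names are hypothetical, and the rule "every 4.x build is Qt6-based" is an assumption drawn from the release description, not a guarantee.

```python
from typing import Tuple


def decode_qgis_version(version_int: int) -> Tuple[int, int, int]:
    """Split an integer such as 34400 or 40000 into (major, minor, patch)."""
    major = version_int // 10000
    minor = (version_int // 100) % 100
    patch = version_int % 100
    return major, minor, patch


def needs_qt6_code_path(version_int: int) -> bool:
    """Assumption: gate Qt6-specific code on the major version being 4 or later."""
    return decode_qgis_version(version_int)[0] >= 4


# Hypothetical usage: 34400 stands for 3.44.0 and 40000 for 4.0.0.
for value in (34400, 40000):
    print(value, decode_qgis_version(value), needs_qt6_code_path(value))
```

A plugin could keep its existing 3.x code behind the `False` branch and move functionality over one module at a time.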

The Crucible of the Mailing List: Why the Delay Mattered

The road to the Norrköping release was not without its agonizing hurdles and intense debates. Originally scheduled for an October 2025 release alongside the completion of the 3.44 version cycle, QGIS 4.0 was consciously and publicly delayed to February, and ultimately early March, of 2026.

This delay was the direct result of transparent, rigorous, and highly technical debate on the developer mailing lists. Key community figures, including Mathieu Pellerin and Nyall Dawson, identified that while the Continuous Integration (CI) pipelines for the Qt6 port were largely operational, a critical handful of automated tests that had passed flawlessly under Qt5 were suddenly failing or being skipped under the new Qt6 builds. Unwilling to compromise on testing coverage and overall platform stability, the community reached a hard-fought consensus to defer the release. In a defining moment of technical leadership, Dawson confirmed by September 2025 that the CI blockers (meticulously tracked under pull request #62809) had been completely resolved, meaning the release could finally move forward safely.
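The kind of check that surfaced those blockers can be sketched in a few lines: compare the per-test outcomes of two builds and list every test that passed under the old framework but no longer passes under the new one. This is not the QGIS project's actual CI tooling; the test names and the result format below are invented for illustration.

```python
from typing import Dict, List


def find_regressions(qt5: Dict[str, str], qt6: Dict[str, str]) -> List[str]:
    """Return tests that passed under Qt5 but did not pass under Qt6.

    A test that is missing from the Qt6 results is treated as skipped.
    """
    regressions = []
    for name, status in sorted(qt5.items()):
        if status == "passed" and qt6.get(name, "skipped") != "passed":
            regressions.append(name)
    return regressions


# Hypothetical result sets keyed by test name.
qt5_results = {
    "test_layout_export": "passed",
    "test_pdf_output": "passed",
    "test_legacy_widget": "failed",
}
qt6_results = {
    "test_layout_export": "passed",
    "test_pdf_output": "skipped",
}

print(find_regressions(qt5_results, qt6_results))
```

Counting skipped tests as regressions mirrors the concern raised on the mailing list: a skipped test shrinks coverage just as surely as a failing one.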

The delay, while frustrating to some eager users, yielded immense technical benefits. Crucially, it allowed for the perfection of platform-specific deployment challenges. Most notably, the extra time enabled the team to finalize native, notarized packages for macOS users, effectively ending years of complex, frustrating installation workarounds for the dedicated Apple user base. To avoid punishing Mac users during the delay, the community even took the extraordinary step of backporting the new notarized build process to the older QGIS 3.44 version. Furthermore, the extra months provided the broader open-source ecosystem—including critical package maintainers for Linux distributions like Debian, Fedora, and Ubuntu—the precise time necessary to engineer systems that could support Qt5 and Qt6 side-by-side.

New Horizons: Features, Stability, and the 4.x LTR Roadmap

With the historic release of 4.0, the developer community has introduced over 100 brand-new features, quietly expanding the application's immense power. Among the highly anticipated additions is an incredibly intuitive 3D cross-section tool engineered by Nyall Dawson, allowing users to effortlessly slice through complex 3D scenes by simply picking a region of interest on the 2D canvas. The community is also buzzing about the introduction of native Jupyter Notebook integration, pioneered by Qiusheng Wu, which brings the power of interactive Python data science directly into the QGIS interface. Adjacent to the core codebase, the revamped QGIS Hub is experiencing an unprecedented period of explosive growth, acting as a sleek, centralized repository for the community to share projects, customized styles, and automated scripts.

For massive enterprise and institutional users who require absolute, unwavering stability, the community has provided clear, reassuring guidance. QGIS 3.44 will serve as the final Long Term Release (LTR) of the legendary 3.x series, and its life has been extended to be fully supported until May 2026. The first official LTR of the new, modern architecture will be QGIS 4.2, carefully scheduled for release in October 2026.

Until that LTR arrives, users across the globe are encouraged to explore the Norrköping release, marvel at the newfound rendering speed and security of the Qt6 architecture, and participate in a vibrant ecosystem that proves, year after year, that open-source passion and collaboration can rival, and often exceed, any proprietary software on Earth.

| Feature | QGIS 3.x Era (Legacy) | QGIS 4.x Era (Modern) |
|---|---|---|
| Core Application Framework | Qt 5.15 (Approaching End of Support) | Qt6 (Modern, Secure, High Performance) |
| Plugin Compatibility | Manual validation by independent developers | Automated validation via pyqgis4-checker |
| macOS Deployment | Complex installations, security warnings | Native, notarized packages |
| 3D Rendering | Basic 3D canvas interaction | Advanced tools & dynamic cross-sectioning |
| LTR Focus | Version 3.44 (Support ends May 2026) | Version 4.2 (Coming Oct 2026) |

The Alchemist of Pixels: DeepMind's AlphaEarth Foundations 2025

If QGIS 4.0 represents the beautiful democratization of the spatial workbench, then Google DeepMind’s AlphaEarth Foundations represents a total, reality-bending revolution in the raw materials that are placed upon that workbench. Launched initially in July 2025 and updated comprehensively for the 2025 calendar year just last week, the AlphaEarth Foundations Satellite Embeddings represent a paradigm shift in how the physical planet is observed, quantified, modeled, and understood.

For decades, the Earth observation industry has faced a crippling, seemingly insurmountable bottleneck. Satellites from the European Commission’s Copernicus Sentinel program, the United States Geological Survey's Landsat program, and a growing constellation of commercial operators capture an astonishing, petabyte-scale volume of data every single day. This data is inherently and chaotically multimodal, consisting of multi-spectral optical imagery, thermal signatures, cloud-penetrating synthetic aperture radar (SAR), airborne LiDAR point clouds, global elevation models, and highly localized climate data.

Historically, extracting meaningful, actionable answers from this avalanche of raw data required massive computational "heavy lifting". Scientists and remote sensing experts were forced into grueling routines: downloading raw, heavy imagery, applying complex algorithms to correct for atmospheric distortion, painstakingly masking out persistent cloud cover, aligning pixels across temporal stacks, and manually engineering specific features before they could even begin applying machine learning classifiers [6]. Furthermore, traditional deep learning models demanded tens of thousands of manually annotated geographic labels to function with any degree of accuracy.

AlphaEarth Foundations eliminates this bottleneck entirely, operating as a brilliant "virtual satellite" that fundamentally changes the physics and economics of geospatial analysis.

The Mathematical Magic of the 64-Dimensional Sphere

At the core of the AlphaEarth breakthrough is the beautifully abstract mathematical concept of the embedding [6]. Developed by an elite team of researchers at DeepMind and Google, including Emily Schechter, Matt Hancher, Valerie Pasquarella, and Christopher F. Brown, the AlphaEarth model utilizes advanced multimodal deep learning to assimilate incredibly diverse observation streams. Instead of simply delivering raw red, green, blue, or near-infrared pixel values to the user, the artificial intelligence processes an entire year's worth of complex temporal and multimodal inputs for every single 10-meter square of the Earth's terrestrial surface and shallow waters.

The output of this massive computational effort is a 64-dimensional vector, or embedding, for every pixel on the globe [5]. Conceptually, a Satellite Embedding can be thought of as a specific coordinate on the surface of an unimaginably complex 64-dimensional sphere. These 64 abstract dimensions capture subtle, highly intricate relationships between spectral signatures, physical terrain characteristics, and temporal environmental conditions throughout the calendar year.

The physical interpretability of these highly abstract dimensions is nothing short of extraordinary. A massive recent academic evaluation of AlphaEarth, analyzing 12.1 million samples across the Continental United States, tested the embeddings against 26 distinct environmental variables spanning hydrology, vegetation, temperature, and terrain. The study demonstrated conclusively that individual embedding dimensions map directly onto specific, real-world land surface properties. The embeddings reconstruct these physical variables with astonishing fidelity; for instance, temperature and elevation correlations approach an almost perfect R² value of 0.97, while 12 of the 26 variables exceeded an R² of 0.90.
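For readers less familiar with the metric, R² (the coefficient of determination) measures how much of the variance in an observed variable is explained by a model's predictions. The sketch below shows the standard formula on invented numbers; it is not the evaluation code from the study, and the elevation values are purely illustrative.

```python
from typing import Sequence


def r_squared(observed: Sequence[float], predicted: Sequence[float]) -> float:
    """Coefficient of determination: 1 - (residual sum of squares / total sum of squares)."""
    if len(observed) != len(predicted) or not observed:
        raise ValueError("inputs must be non-empty and of equal length")
    mean_obs = sum(observed) / len(observed)
    ss_res = sum((o - p) ** 2 for o, p in zip(observed, predicted))
    ss_tot = sum((o - mean_obs) ** 2 for o in observed)
    if ss_tot == 0:
        raise ValueError("observed values have zero variance")
    return 1.0 - ss_res / ss_tot


# Invented example: "observed" elevations versus values reconstructed from embeddings.
observed = [10.0, 12.0, 14.0, 16.0]
predicted = [10.5, 11.5, 14.5, 15.5]
print(round(r_squared(observed, predicted), 2))
```

Here the residual sum of squares is 1.0 and the total sum of squares is 20.0, so R² works out to 1 - 1/20 = 0.95. A value of 0.97 therefore means only about 3% of the variance is left unexplained.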

This means that complex physical realities are now permanently encoded into compact, incredibly fast, analysis-ready datasets accessible directly via the Earth Engine API or Google Cloud Storage. Users no longer need deep learning expertise or supercomputers to benefit from artificial intelligence; they simply use the embeddings for pixel-based similarity searches, instant clustering, or lightweight classification workflows.
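A pixel-based similarity search of the kind mentioned above reduces to comparing vectors. The following sketch uses toy 4-dimensional vectors in place of the real 64-dimensional embeddings and plain Python lists in place of Earth Engine objects; the function names and sample values are assumptions made for illustration.

```python
import math
from typing import Dict, List, Sequence, Tuple


def normalize(vector: Sequence[float]) -> List[float]:
    """Scale a vector to unit length so it sits on the unit sphere."""
    norm = math.sqrt(sum(x * x for x in vector))
    if norm == 0:
        raise ValueError("cannot normalize a zero vector")
    return [x / norm for x in vector]


def cosine_similarity(a: Sequence[float], b: Sequence[float]) -> float:
    """Dot product of the two unit-length vectors (1.0 means identical direction)."""
    return sum(x * y for x, y in zip(normalize(a), normalize(b)))


def most_similar(
    query: Sequence[float], candidates: Dict[str, Sequence[float]], k: int = 1
) -> List[Tuple[str, float]]:
    """Return the k candidate pixels whose embeddings point closest to the query."""
    scored = [(name, cosine_similarity(query, vec)) for name, vec in candidates.items()]
    scored.sort(key=lambda item: item[1], reverse=True)
    return scored[:k]


# Toy data: a reference pixel and two candidate pixels (hypothetical values).
reference = [1.0, 0.0, 0.0, 0.0]
pixels = {"pixel_a": [0.9, 0.1, 0.0, 0.0], "pixel_b": [0.0, 1.0, 0.0, 0.0]}
print(most_similar(reference, pixels, k=1))
```

Because the comparison is a dot product of unit vectors, it scales to large pixel counts with ordinary array libraries and no model inference.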

| AlphaEarth Foundation Feature | Traditional Methodology | The AlphaEarth Advantage |
|---|---|---|
| Data Representation | Raw, multi-band optical/radar pixel values | 64-dimensional semantic embeddings |
| Temporal Context | Point-in-time snapshots; manual time-series stacking | Annual summaries of year-round dynamic patterns |
| Weather Interference | High data loss; requires extensive manual cloud masking | Multimodal AI "sees through" persistent cloud cover |
| Computational Load | Demands massive compute for raw pre-processing | Analysis-ready; zero heavy inference required |
| Training Requirements | Tens of thousands of manually annotated labels | Significantly fewer labels (hundreds) required |

Real-World Alchemy: From the Amazon to Antarctica

The human, political, and environmental impact of this technology is profound, as vividly evidenced by the specific case studies emerging from the 2025 dataset release. Because the 64-dimensional embedding space is specifically designed by DeepMind to be temporally consistent, comparing the 2024 dataset vectors with the 2025 dataset vectors allows researchers to instantly isolate catastrophic environmental changes or rapid human development. This is achieved simply by calculating the mathematical angle between the annual vectors for any given location.
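The angle calculation itself is short. The sketch below computes the angle between two annual vectors for one location and flags the location when the angle exceeds a threshold. The 2-dimensional toy vectors and the threshold of 0.5 radians are arbitrary assumptions for illustration, not values published with the dataset.

```python
import math
from typing import Sequence


def angle_between(a: Sequence[float], b: Sequence[float]) -> float:
    """Angle in radians between two vectors: acos(a.b / (|a| |b|))."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        raise ValueError("angle is undefined for a zero vector")
    # Clamp to [-1, 1] so rounding error cannot push acos out of its domain.
    cosine = max(-1.0, min(1.0, dot / (norm_a * norm_b)))
    return math.acos(cosine)


def has_changed(vec_2024: Sequence[float], vec_2025: Sequence[float],
                threshold_rad: float = 0.5) -> bool:
    """Flag a location whose embedding rotated by more than the (assumed) threshold."""
    return angle_between(vec_2024, vec_2025) > threshold_rad


# Toy vectors standing in for the 64-dimensional annual embeddings.
stable_site = ([1.0, 0.0], [0.99, 0.05])
changed_site = ([1.0, 0.0], [0.0, 1.0])
print(has_changed(*stable_site), has_changed(*changed_site))
```

An unchanged location yields an angle near zero, while a location whose land cover was transformed rotates toward a different part of the embedding space.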

In practical application, this elegant math has allowed analysts to monitor subtle but geopolitically explosive hydrological shifts. For example, researchers utilized the 2025 update to identify precise, undeniable water level changes in the heavily contested Nile River behind the newly constructed Grand Ethiopian Renaissance Dam. Similarly, it has illuminated rapid infrastructure development across the globe, vividly detailing the exact construction progress of the massive Navi Mumbai International Airport over a single year.

The model's remarkable ability to seamlessly assimilate multimodal radar and optical data allows it to succeed brilliantly where traditional optical satellites fail entirely. In Ecuador, the AlphaEarth embeddings successfully bypassed the persistent, year-round cloud cover that plagues the region, managing to accurately classify agricultural plots in various stages of development—a feat previously thought impossible without boots on the ground. In Antarctica—a desolate region notoriously difficult to map due to highly irregular satellite coverage and blindingly high surface albedo—the model rendered complex surface topographies in unprecedented, unified detail.

The global scientific community has responded to the technology with overwhelming enthusiasm and a sense of deep relief. Sampath Manage, a researcher quoted in the community response, described the move to 64-dimensional embeddings as a definitive "game changer," noting that distilling petabytes of data while perfectly maintaining temporal signatures handles exactly the kind of agonizing 'heavy lifting' that has held back modern environmental science.

Carbon Markets and Canopy Heights: The Renoster Case Study

The real-world applications of AlphaEarth extend deeply into global economic frameworks, particularly in the desperate fight against climate change. John B. Kilbride, a Remote Sensing Forest Scientist at the climate technology company Renoster, has successfully integrated AlphaEarth Foundations into rigorous workflows for forest carbon offset verification.

The carbon offset market, long plagued by accusations of greenwashing, relies heavily on proving beyond a shadow of a doubt that specific forests are sequestering additional carbon through avoided deforestation, massive reforestation, or improved forest management practices. By combining AlphaEarth's temporal embeddings with highly accurate Airborne LiDAR data, Kilbride and his team can bypass the chronic headaches associated with pre-processing satellite time-series data. The result is the rapid generation of highly accurate, structurally sound models of aboveground biomass. This directly accelerates the validation of high-quality carbon credits, introducing desperately needed transparency and speed into a critical mechanism for funding global climate resilience.

Academic Rigor: FEMA, Dengue Fever, and Scalable Labels

The academic validation of AlphaEarth has been equally stunning. When AlphaEarth's landscape features are fused with other human-centric foundation models, predictive performance improves markedly. Google researchers recently demonstrated that fusing AlphaEarth spatial embeddings with their Population Dynamics Foundations model dramatically improved the forecasting of Dengue fever outbreaks in Brazil. By combining landscape data with human movement, the explanatory accuracy (R²) of the disease vector model leaped from 0.456 to an impressive 0.656, an absolute gain of 0.200.

Similarly, disaster predictions for the United States FEMA National Risk Index improved by an average of 11% across 20 distinct hazards when utilizing AlphaEarth embeddings, with massive, life-saving gains in predicting the risks of unpredictable tornadoes (+25% R²) and devastating riverine flooding (+17% R²).

Furthermore, researchers are using AlphaEarth to solve the crisis of missing geographical labels. A study led by Luc Houriez successfully utilized the embeddings to extend LANDFIRE's Existing Vegetation Type (EVT) dataset beyond the borders of the USA and deeply into Canada. By leveraging the fundamental physical truths encoded in the embeddings, even basic models like random forests achieved a staggering 81% classification accuracy in extending the physical map across international borders, vastly outperforming traditional methods.
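To give a feel for why simple models can go a long way on top of embeddings, here is a deliberately minimal label-extension sketch. It uses a nearest-centroid classifier as a stand-in, not the random forest used in the study, and the 2-dimensional vectors and vegetation labels are invented.

```python
from typing import Dict, List, Sequence, Tuple


def fit_centroids(samples: List[Tuple[Sequence[float], str]]) -> Dict[str, List[float]]:
    """Average the embeddings of each labeled class into one centroid per class."""
    sums: Dict[str, List[float]] = {}
    counts: Dict[str, int] = {}
    for vector, label in samples:
        if label not in sums:
            sums[label] = [0.0] * len(vector)
            counts[label] = 0
        sums[label] = [s + x for s, x in zip(sums[label], vector)]
        counts[label] += 1
    return {label: [s / counts[label] for s in total] for label, total in sums.items()}


def predict(centroids: Dict[str, List[float]], vector: Sequence[float]) -> str:
    """Assign the label of the closest centroid (squared Euclidean distance)."""
    def squared_distance(centroid: List[float]) -> float:
        return sum((c - v) ** 2 for c, v in zip(centroid, vector))
    return min(centroids, key=lambda label: squared_distance(centroids[label]))


# Hypothetical labeled pixels from a region where labels exist.
labeled = [
    ([1.0, 0.0], "forest"),
    ([0.9, 0.1], "forest"),
    ([0.0, 1.0], "grassland"),
    ([0.1, 0.9], "grassland"),
]
model = fit_centroids(labeled)

# Hypothetical unlabeled pixel from a region outside the original label coverage.
print(predict(model, [0.8, 0.2]))
```

The same shape of workflow applies with a random forest or any other classifier: train where labels exist, then predict where only embeddings are available.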

AlphaEarth Foundations is not just a new map; it is a continuously updating, living mathematical translation of the planet's physical state. It dramatically lowers the barrier to entry for scientists worldwide, allowing underfunded organizations to tackle food security, track illegal deforestation, and plan for climate resilience with 24% lower error rates than previous state-of-the-art models, even when their labeled training data is exceedingly scarce.

The Enterprise Canvas: Google Earth 2026

If AlphaEarth provides the raw mathematical truth of the planet, Google Earth has now evolved to be the ultimate enterprise canvas upon which that truth is interpreted, debated, and acted upon. Since its awe-inspiring origins as Keyhole EarthViewer over two decades ago, Google Earth has captivated the public imagination, serving as an inspirational, almost magical tool for geographic exploration, classroom education, and digital curiosity [8]. In 2026, however, the platform has decisively shed its purely consumer-focused identity to become a formidable powerhouse of enterprise spatial computing.

Led by Senior Product Manager Brian Ho and Product Manager Patrik Blohmé, the vision for Google Earth 2026 is sweeping and uncompromising: to transform the beloved software into a definitive "source of truth for critical geospatial decisions". The strategic goal is no longer just exploration; it is to empower civil engineers, urban planners, real estate developers, and corporate sustainability leaders to seamlessly merge their own private intelligence with Google's massive global digital twin.

The Architecture of Enterprise Spatial Computing

To execute this ambitious corporate vision, Google Earth has been officially folded into the Google Maps Platform family and segmented into highly capable, monetized Professional tiers. While the Standard tier thankfully remains free—preserving the legacy of democratized geographic exploration for students and hobbyists—the newly introduced Professional and Professional Advanced plans unlock a staggering array of domain-specific data and immense project scalability.

For professionals working in Architecture, Engineering, and Construction (AEC), the primary friction of spatial planning often lies in the agonizing slowness of data acquisition. Gathering municipal zoning boundaries, calculating historical surface temperatures, or mapping out elevation topologies for a new development can take days of manual labor and require navigating labyrinthine government portals [7]. The Professional plans bypass this entirely by injecting curated, high-resolution, AI-powered data layers directly into the user's workflow.

Google Earth 2026 Service Tiers

Professional Planning & Geospatial Limits

| Service Tier | Monthly Pricing | Features & Data Layers | Design & Data Limits |
|---|---|---|---|
| Standard | $0 /mo | | 100 designs/mo, 1 GB import |
| Professional | $75 /mo | | 500 designs/mo, 10 GB import |
| Professional Advanced | $150 /mo | | 1,000 designs/mo, 20 GB import |

These specialized layers are transformative for early-stage site analysis and risk assessment. Consider an urban planner assessing a sprawling neighborhood for a new solar microgrid. In the past, this required hiring external survey consultants to map solar potential. Today, the Advanced tier provides immediate, localized access to rooftop reflectivity indices, local tree canopy occlusion data, and precise 20-meter topographic contours. The design constraints scale massively, allowing clean energy firms to rapidly prototype up to 1,000 localized solar designs per month on vast sites spanning 100 acres, complete with automated municipal tax lot selections.
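For teams deciding which plan fits, the published limits can be turned into a simple lookup. The sketch below encodes the three tiers from the pricing table and returns the cheapest one that covers a given workload. Treating the limits as inclusive, and treating the import figure as a single quota, are assumptions; the function name is hypothetical.

```python
from typing import List, Optional, Tuple

# (tier name, monthly price in USD, designs per month, import limit in GB),
# ordered from cheapest to most expensive.
TIERS: List[Tuple[str, int, int, int]] = [
    ("Standard", 0, 100, 1),
    ("Professional", 75, 500, 10),
    ("Professional Advanced", 150, 1000, 20),
]


def cheapest_tier(designs_per_month: int, import_gb: float) -> Optional[str]:
    """Return the first (cheapest) tier whose limits cover the workload, or None."""
    for name, _price, max_designs, max_import_gb in TIERS:
        if designs_per_month <= max_designs and import_gb <= max_import_gb:
            return name
    return None


# Hypothetical workloads.
print(cheapest_tier(50, 0.5))
print(cheapest_tier(300, 15))
print(cheapest_tier(2000, 1))
```

A workload that exceeds every tier returns `None`, which in practice would mean splitting the work across months or accounts, or contacting sales.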

Community Friction and the Economics of Convenience

The introduction of paid subscription tiers to a platform that has been traditionally celebrated for being free inevitably sparked friction. Across spatial technology forums and platforms like Reddit, the reaction from the broader GIS community has been intensely mixed, perfectly reflecting the growing pains of a rapidly maturing spatial ecosystem.

Some hobbyists and early-stage users expressed vocal frustration, cynically noting that some of the newly paywalled data layers are essentially just publicly accessible KML files repackaged behind a subscription. However, seasoned industry professionals have quickly pushed back, recognizing the immense, undeniable value proposition of the service. As one community moderator correctly and bluntly summarized, hosting, curating, and maintaining enterprise-grade web services at a global scale is an exceptionally expensive endeavor.

The true financial value of the Professional tiers lies not just in the raw data itself, but in the miraculous optimization of the workflow. Google Earth completely eliminates the tedious, soul-crushing process of searching for obscure municipal databases, cleaning broken shapefiles, and resolving maddening projection errors. It provides a frictionless, beautifully rendered cloud-based collaborative environment where globally distributed teams can seamlessly drag and drop GeoJSON files, align on a single visual source of truth, and present high-resolution, interactive 3D visualizations directly to non-technical stakeholders and corporate boards. For a commercial real estate firm or a major infrastructure developer, $150 a month is a phenomenally negligible price to pay for tools that actively accelerate site selection and risk assessment by weeks.
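For teams preparing their own data for that kind of drag-and-drop import, a GeoJSON file is just structured JSON. The sketch below builds a minimal FeatureCollection with one polygon, following the GeoJSON convention of longitude-then-latitude coordinates and a closed exterior ring. The site name and coordinates are invented, and whether a particular file meets Google Earth's import requirements is not something this example verifies.

```python
import json
from typing import Dict, List, Sequence


def make_site_feature(name: str, ring: Sequence[Sequence[float]]) -> Dict:
    """Build a GeoJSON Feature with a single-ring polygon, closing the ring if needed."""
    coords: List[List[float]] = [list(point) for point in ring]
    if coords[0] != coords[-1]:
        coords.append(list(coords[0]))  # GeoJSON rings must end where they start
    return {
        "type": "Feature",
        "properties": {"name": name},
        "geometry": {"type": "Polygon", "coordinates": [coords]},
    }


def make_collection(features: Sequence[Dict]) -> Dict:
    """Wrap features in a FeatureCollection, the usual top-level GeoJSON object."""
    return {"type": "FeatureCollection", "features": list(features)}


# Hypothetical site outline, given as (longitude, latitude) pairs.
site = make_site_feature(
    "Hypothetical site",
    [(16.18, 58.59), (16.19, 58.59), (16.19, 58.60), (16.18, 58.60)],
)
print(json.dumps(make_collection([site]), indent=2))
```

Saving the printed text to a `.geojson` file gives something most GIS tools, QGIS included, can open directly.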

Gemini and the Dawn of Agentic Geospatial Intelligence

Beyond massive data aggregation, the most profound and futuristic addition to the Google Earth ecosystem is the deep integration of Generative AI via Google's Gemini models. This integration officially introduces what industry leaders are calling the era of "agentic geospatial intelligence".

Traditionally, interrogating a GIS platform required an intimate, highly technical understanding of SQL queries, spatial operators, and highly specific, clunky interface tools. It was a discipline reserved solely for trained analysts. With the introduction of the new "Ask Google Earth" feature, that barrier to entry has been obliterated. Users can now interrogate massive catalogs of satellite imagery and localized spatial data using simple, natural, conversational language.

This breathtaking capability is powered by a new underlying framework termed Geospatial Reasoning. Geospatial Reasoning allows the AI agent to automatically connect disparate, previously siloed Earth AI models. A user can ask a complex, multi-variable question, and the AI will autonomously handle the "heavy lifting of data wrangling," instantly fusing weather forecasts, demographic population maps, and optical satellite imagery to deliver a concrete, actionable answer rather than just a pretty visualization.

The humanitarian implications of this framework are already being felt in the field. The non-profit organization GiveDirectly has utilized Geospatial Reasoning to absolutely revolutionize their disaster response protocols [38]. By asking the AI to cross-reference real-time flood inundation models with highly localized population density data, the organization can immediately identify which specific vulnerable communities require direct financial aid and prioritize their resource distribution accordingly, saving lives in the critical hours following a catastrophe.
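The cross-referencing step can be pictured as a small ranking problem. The sketch below multiplies each community's population by the fraction of its area a flood model marks as inundated, then sorts by the result. It is not GiveDirectly's or Google's method; the community names, populations, and flooded fractions are invented, and real prioritization would weigh many more factors.

```python
from typing import Dict, List, Tuple, Union

Community = Dict[str, Union[str, float]]


def rank_by_exposure(communities: List[Community]) -> List[Tuple[str, float]]:
    """Rank communities by estimated exposed population, highest first.

    Exposure is approximated as population * flooded_fraction.
    """
    scored = [
        (str(c["name"]), float(c["population"]) * float(c["flooded_fraction"]))
        for c in communities
    ]
    return sorted(scored, key=lambda item: item[1], reverse=True)


# Hypothetical inputs: population counts and the share of each area flooded.
communities = [
    {"name": "Community A", "population": 1200, "flooded_fraction": 0.5},
    {"name": "Community B", "population": 5000, "flooded_fraction": 0.1},
    {"name": "Community C", "population": 300, "flooded_fraction": 0.9},
]

for name, exposed in rank_by_exposure(communities):
    print(name, round(exposed))
```

Note that the largest community is not ranked first: a smaller community that is half under water carries more estimated exposure than a large one that is only lightly affected.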

The AI does not merely retrieve historical information; it actively anticipates future change. This generative capacity frees spatial analysts from the mundane, repetitive mechanics of data preparation. It elevates their roles, ensuring that highly trained human minds can focus on high-level strategy, nuanced environmental interpretation, and creative, empathetic urban problem-solving.

The Maturation of Spatial Intelligence

When observing QGIS 4.0, AlphaEarth Foundations, and Google Earth Professional together, a clear pattern emerges: the geospatial industry is increasingly focused on interoperability and practical application.

The shift from static 2D maps toward dynamic, frequently updated models, often referred to as digital twins, is becoming standard practice rather than a futuristic concept. As utilities, government agencies, and climate scientists handle growing volumes of sensor data, tools that support predictive analytics and continuous condition monitoring are simply becoming standard requirements for managing infrastructure risk and adapting to environmental changes.

Industry leaders are echoing this practical shift. In a recent keynote address at the GeoBuiz Summit 2026, Damian Spangrud, a Director at Esri, articulated how spatial computing is integrating into the modern enterprise. He emphasized that location has become a unifying framework that bridges legacy IT databases, real-time field operations, and economic modeling. Digital twins, Spangrud noted, are trusted systems of record that drive genuine business value and ensure regulatory compliance. Similarly, Stephanie Dockstader, an Esri product manager speaking at GeoWeek, highlighted that the main hurdles for organizations today are often just a lack of enterprise vision and understanding of what modern spatial data can efficiently achieve. The developments of this past week directly address those hurdles.

A Practical Ecosystem

Within this evolving ecosystem, DeepMind's AlphaEarth serves as a streamlined foundational data layer. By updating its 64-dimensional mathematical embeddings, it offers a more accessible way to track carbon stocks, monitor hydrological shifts, and expose environmental variables via an API.

Google Earth builds on this by providing a collaborative interface. Its Professional tiers allow corporate decision-makers, urban planners, and crisis responders to synthesize complex variables and visualize them simply on an intuitive 3D globe.

Meanwhile, QGIS 4.0 Norrköping remains the essential, flexible workbench. For academic researchers extending AI models, forestry scientists running customized LiDAR regressions, and local municipalities planning their cities, QGIS is indispensable. Its modernized Qt6 architecture ensures that open-source tools remain reliable as computational demands naturally increase.

The developments of early 2026 underscore a broader trend: spatial intelligence is becoming part of everyday professional workflows. The heavy computational lifting is being optimized, data is becoming more accessible, user interfaces are increasingly conversational, and core open-source infrastructure has been modernized for longevity. For the professionals designing resilient cities, monitoring the biosphere, and striving for a safer society, these tools offer a more capable and efficient way to get the job done. The map continues its steady evolution from a static reference into an active, practical instrument.

Works cited

Geospatial Trends to Watch in 2026 | GeoAI, Digital Twins & LiDAR - SurvTech Solutions, accessed March 16, 2026, https://www.survtechsolutions.com/post/geospatial-trends-2026

GeoBuiz Summit 2026 Keynote | Spatial Computing, Digital Twins & the Future of Enterprise GIS - YouTube, accessed March 16, 2026, https://www.youtube.com/watch?v=Yz2d7saZcSc

QGIS 4.0 Norrköping is released! – QGIS.org blog, accessed March 16, 2026, https://blog.qgis.org/2026/03/09/qgis-4-0-norrkoping-is-released/

Planet OSGeo, accessed March 16, 2026, https://planet.osgeo.org/

AlphaEarth Foundations Satellite Embeddings: A Look at Our Planet in 2025 - Medium, accessed March 16, 2026, https://medium.com/google-earth/alphaearth-foundations-satellite-embeddings-a-look-at-our-planet-in-2025-f23349370399

AI-powered pixels: Introducing Google's Satellite Embedding dataset - Medium, accessed March 16, 2026, https://medium.com/google-earth/ai-powered-pixels-introducing-googles-satellite-embedding-dataset-31744c1f4650

What's coming to Google Earth in 2026 | by Google Earth | Google ..., accessed March 16, 2026, https://medium.com/google-earth/from-the-product-lead-whats-coming-to-google-earth-in-2026-3bf87320dfb9

A whole new way to work #onEarth: Introducing Google Earth's new professional plans, accessed March 16, 2026, https://medium.com/google-earth/a-whole-new-way-to-work-onearth-introducing-google-earths-new-professional-plans-f80c20b944c9

Welcome QGIS 4.0 - community release video - YouTube, accessed March 16, 2026, https://www.youtube.com/watch?v=SQJZm5Y0EKs

QGIS 4.0 "Norrköping" Released: Key Updates, Qt6 Migration, and Upgrade Guide, accessed March 16, 2026, https://geo.malagis.com/qgis-4-norrkping-released-key-updates-qt6-migration-and-upgrade-guide.html

Changelog for QGIS 4.0 · QGIS Web Site, accessed March 16, 2026, https://qgis.org/project/visual-changelogs/visualchangelog40/

QGIS Feature Frenzy :: FOSS4G 2025 - pretalx - OSGeo, accessed March 16, 2026, https://talks.osgeo.org/foss4g-2025/talk/9KDXDY/

QGIS.org blog, accessed March 16, 2026, https://blog.qgis.org/

Changes Ahead: QGIS Is Moving to Qt6 and Launching QGIS 4.0! – QGIS.org blog, accessed March 16, 2026, https://blog.qgis.org/2025/04/17/qgis-is-moving-to-qt6-and-launching-qgis-4-0/

Update on QGIS 4.0 Release Schedule and LTR Plans, accessed March 16, 2026, https://blog.qgis.org/2025/10/07/update-on-qgis-4-0-release-schedule-and-ltr-plans/

Why QGIS is so ugly? : r/gis - Reddit, accessed March 16, 2026, https://www.reddit.com/r/gis/comments/1oubut4/why_qgis_is_so_ugly/

QGIS 4.0 is gonna drop in less than 4 days! - Reddit, accessed March 16, 2026, https://www.reddit.com/r/QGIS/comments/1risb6h/qgis_40_is_gonna_drop_in_less_than_4_days/

John B. Kilbride - Google Scholar, accessed March 16, 2026, https://scholar.google.com/citations?user=WBeNszAAAAAJ&hl=en

Changelog for QGIS 3.44 · QGIS Web Site - QGIS.org, accessed March 16, 2026, https://qgis.org/project/visual-changelogs/visualchangelog344/

Posts by Anita Graser - QGIS Planet, accessed March 16, 2026, https://planet.qgis.org/subscribers/anita_graser/

Free and Open Source GIS Ramblings | written by Anita Graser aka Underdark, accessed March 16, 2026, https://anitagraser.com/

AlphaEarth Foundations helps map our planet in unprecedented detail - Google DeepMind, accessed March 16, 2026, https://deepmind.google/blog/alphaearth-foundations-helps-map-our-planet-in-unprecedented-detail/

GeoAI in Earth Engine: AlphaEarth Foundations and the Satellite Embedding dataset, accessed March 16, 2026, https://www.youtube.com/watch?v=oTqb378w14E

AlphaEarth Foundations: An embedding field model for accurate and efficient global mapping from sparse label data - arXiv, accessed March 16, 2026, https://arxiv.org/html/2507.22291v1

From Imagery to Insight: Google AlphaEarth Foundations in CARTO, accessed March 16, 2026, https://carto.com/blog/google-alphaearth-foundations-in-carto

[2602.10354] Physically Interpretable AlphaEarth Foundation Model Embeddings Enable LLM-Based Land Surface Intelligence - arXiv, accessed March 16, 2026, https://arxiv.org/abs/2602.10354

Improved forest carbon estimation with AlphaEarth Foundations and Airborne LiDAR data | by Google Earth - Medium, accessed March 16, 2026, https://medium.com/google-earth/improved-forest-carbon-estimation-with-alphaearth-foundations-and-airborne-lidar-data-af2d93e94c55

Google Earth AI: Unlocking geospatial insights with foundation models and cross-modal reasoning, accessed March 16, 2026, https://research.google/blog/google-earth-ai-unlocking-geospatial-insights-with-foundation-models-and-cross-modal-reasoning/

[2508.11739] Scalable Geospatial Data Generation Using AlphaEarth Foundations Model, accessed March 16, 2026, https://arxiv.org/abs/2508.11739

Learn about Google Earth plans, accessed March 16, 2026, https://developers.google.com/maps/documentation/earth/earth-plans

Stephanie Dockstader on Digital Twins and the Future of Geospatial Technology, accessed March 16, 2026, https://www.geoweeknews.com/news/stephanie-dockstader-on-digital-twins-and-the-future-of-geospatial-technology

New Year, New Google Earth: Major Upgrades for Faster, Sustainable, Data-Driven Decisions in 2026 - Medium, accessed March 16, 2026, https://medium.com/google-earth/new-year-new-google-earth-major-upgrades-for-faster-sustainable-data-driven-decisions-in-2026-e6a835d8cb30

Google Earth Plans - Google Maps Platform, accessed March 16, 2026, https://mapsplatform.google.com/maps-products/earth/plans/

why in the world is paid premium google earth a thing : r/Anticonsumption - Reddit, accessed March 16, 2026, https://www.reddit.com/r/Anticonsumption/comments/1p7rbrt/why_in_the_world_is_paid_premium_google_earth_a/

Thoughts on Google Earth as a GIS program? - Reddit, accessed March 16, 2026, https://www.reddit.com/r/gis/comments/1qw6dbw/thoughts_on_google_earth_as_a_gis_program/

Will Google Earth Engine be essentially useless for free tier users? : r/gis - Reddit, accessed March 16, 2026, https://www.reddit.com/r/gis/comments/1rqq308/will_google_earth_engine_be_essentially_useless/

Google Earth Pro Reviews 2026: Details, Pricing, & Features | G2, accessed March 16, 2026, https://www.g2.com/products/google-earth-pro/reviews

New updates and more access to Google Earth AI, accessed March 16, 2026, https://blog.google/innovation-and-ai/technology/research/new-updates-and-more-access-to-google-earth-ai/